CHI '26

Stars Without Steps: Bridging Observatory Access Based on Physical Proximity Through Educational XR

Human-Computer Interaction at TU Darmstadt

We are a collective of the following labs:

07. – 09. April 2027 @ TU Darmstadt, Darmstadt, Germany

Meet Our Team!

Max Mühlhäuser

Christian Reuter

Florian Müller

Jan Gugenheimer

Markus Henkel

Katrin Hartwig

Franziska Schneider

Carlos Böhm

Nina Gerber

Hannah Krahl

Sophie Glaab

Dominik Schön

Jonas Wombacher

Yanni Mei

Kian Mirbagheri

CHI '26

From TikTok to Telegram: Cross-Platform Efficacy and User Acceptance of Erroneous and Flawless Misinformation Interventions

CHI '26

CHI '26

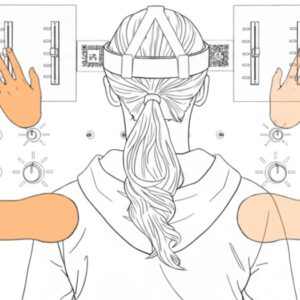

Do It Fast, Forget It Fast: How Timing and Limb Visualizations Affect First-Person Augmented Reality Instructions

CHI '26

Anticipation Without Acceleration: Benefits of Shared Gaze in Collocated Augmented Reality Collaboration

CHI '26

CHI '26

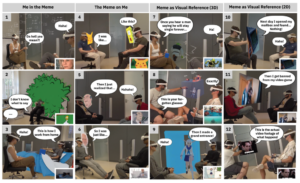

Meme, Myself and AR: Exploring Memes Sharing in Face-to-face Conversation using Augmented Reality

CHI '26

ShadAR: LLM-driven shader generation to transform visual perception in Augmented Reality

ToCHI Vol. 26

ToCHI Vol. 26

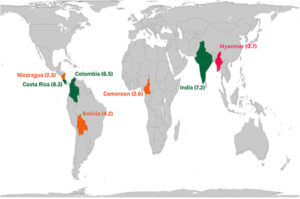

Activists’ Strategies for Coping with Technology-Facilitated Violence in the Global South (TOCHI-Paper)

The research groups behind #teamdarmstadt

The Telecooperation Lab (TK) at TU Darmstadt conducts research in the areas of human-computer interaction, computer-supported cooperative work, and ubiquitous computing.

The PEASEC Lab investigates the intersection of privacy, security, and human-computer interaction, focusing on usable security and privacy.

The HCI Lab at TU Darmstadt explores novel interaction paradigms in augmented and virtual reality, with a focus on extended reality and embodied interaction.

The Urban Interaction Lab researches human interaction in urban environments, exploring smart city concepts and public-space computing.